The Noncommuting-Charges World Tour (Part 4 of 4)

This is the final part of a four-part series covering the recent Perspective on noncommuting charges. I’ve been posting one part every ~5 weeks leading up to my PhD thesis defence. You can find Part 1 here, Part 2 here, and Part 3 here.

In four months, I’ll embark on the adventure of a lifetime—fatherhood.

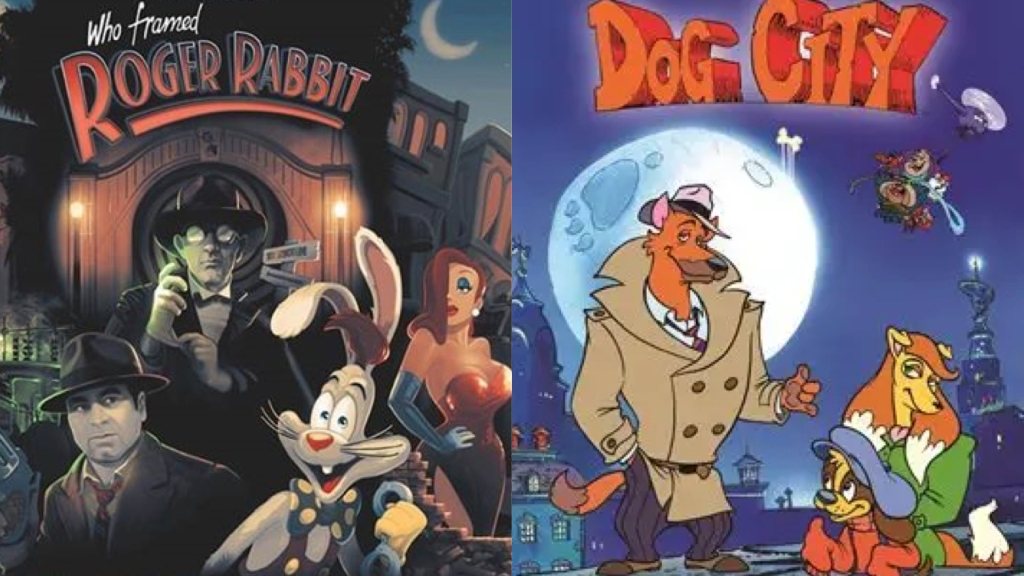

To prepare, I’ve been honing a quintessential father skill—storytelling. If my son inherits even a fraction of my tastes, he’ll soon develop a passion for film noir detective stories. And really, who can resist the allure of a hardboiled detective, a femme fatale, moody chiaroscuro lighting, and plot twists that leave you reeling? For the uninitiated, here’s a quick breakdown of the genre.

To sharpen my storytelling skills, I’ve decided to channel my inner noir writer and craft this final blog post—the opportunities for future work, as outlined in the Perspective—in that style.

Theft at the Quantum Frontier

Under the dim light of a flickering bulb, private investigator Max Kelvin leaned back in his creaky chair, nursing a cigarette. The steady patter of rain against the window was interrupted by the creak of the office door. In walked trouble. Trouble with a capital T.

She was tall, moving with a confident stride that barely masked the worry lines etched into her face. Her dark hair was pulled back in a tight bun, and her eyes were as sharp as the edges of the papers she clutched in her gloved hand.

“Mr. Kelvin?” she asked, her voice a low, smoky whisper.

“That’s what the sign says,” Max replied, taking a long drag of his cigarette, the ember glowing a fiery red. “What can I do for you, Miss…?”

“Doctor,” she corrected, her tone firm, “Shayna Majidy. I need your help. Someone’s about to scoop my research.”

Max’s eyebrows arched. “Scooped? You mean someone stole your work?”

“Yes,” Shayna said, frustration seeping into her voice. “I’ve been working on noncommuting charge physics, a topic recently highlighted in a Perspective article. But someone has stolen my paper. We need to find who did it before they send it to the local rag, The Ark Hive.”

Max leaned forward, snuffing out his cigarette and grabbing his coat in one smooth motion. “Alright, Dr. Majidy, let’s see where your work might have wandered off to.”

They started their investigation with Joey “The Ant” Guzman, an experimental physicist whose lab was a tangled maze of gleaming equipment. Superconducting qubits, quantum dots, ultracold atoms, quantum optics, and optomechanics cluttered the room, each device buzzing with the hum of cutting-edge science. Joey earned his nickname due to his meticulous and industrious nature, much like an ant in its colony.

Guzman was a prime suspect, Shayna had whispered as they approached. His experiments could validate the predictions of noncommuting charges. “The first test of noncommuting-charge thermodynamics was performed with trapped ions,” she explained, her voice low and tense. “But there’s a lot more to explore—decreased entropy production rates, increased entanglement, to name a couple. There are many platforms to test these results, and Guzman knows them all. It’s a major opportunity for future work.”

Guzman looked up from his work as they entered, his expression guarded. “Can I help you?” he asked, wiping his hands on a rag.

Max stepped forward, his eyes scanning the room. “A rag? I guess you really are a quantum mechanic.” He paused for laughter, but only silence answered. “We’re investigating some missing research,” he said, his voice calm but edged with intensity. “You wouldn’t happen to know anything about noncommuting charges, would you?”

Guzman’s eyes narrowed, a flicker of suspicion crossing his face. “Almost everyone is interested in that right now,” he replied cautiously.

Shayna stepped forward, her eyes boring into Guzman’s. “So what’s stopping you from doing experimental tests? Do you have enough qubits? Long enough decoherence times?”

Guzman shifted uncomfortably but kept his silence. Max took another drag of his cigarette, the smoke curling around his thoughts. “Alright, Guzman,” he said finally. “If you think of anything that might help, you know where to find us.”

As they left the lab, Max turned to Shayna. “He’s hiding something,” he said quietly. “But whether it’s your work or how noisy and intermediate scale his hardware is, we need more to go on.”

Shayna nodded, her face set in grim determination. The rain had stopped, but the storm was just beginning.

Their next stop was the dimly lit office of Alex “Last Piece” Lasek, a puzzle enthusiast with a sudden obsession with noncommuting charge physics. The room was a chaotic labyrinth, papers strewn haphazardly, each covered with intricate diagrams and cryptic scrawlings. The stale aroma of old coffee and ink permeated the air.

Lasek was hunched over his desk, scribbling furiously, his eyes darting across the page. He barely acknowledged their presence as they entered. “Noncommuting charges,” he muttered, his voice a gravelly whisper, “they present a fascinating puzzle. They hinder thermalization in some ways and enhance it in others.”

“Last Piece Lasek, I presume?” Max’s voice sliced through the dense silence.

Lasek blinked, finally lifting his gaze. “Yeah, that’s me,” he said, pushing his glasses up the bridge of his nose. “Who wants to know?”

“Max Kelvin, private eye,” Max replied, flicking his card onto the cluttered desk. “And this is Dr. Majidy. We’re investigating some missing research.”

Shayna stepped forward, her eyes sweeping the room like a hawk. “I’ve read your papers, Lasek,” she said, her tone a blend of admiration and suspicion. “You live for puzzles, and this one’s as tangled as they come. How do you plan to crack it?”

Lasek shrugged, leaning back in his creaky chair. “It’s a tough nut,” he admitted, a sly smile playing at his lips. “But I’m no thief, Dr. Majidy. I’m more interested in solving the puzzle than in academic glory.”

As they exited Lasek’s shadowy lair, Max turned to Shayna. “He’s a riddle wrapped in an enigma, but he doesn’t strike me as a thief.”

Shayna nodded, her expression grim. “Then we keep digging. Time’s slipping away, and we’ve got to find the missing pieces before it’s too late.”

Their third stop was the office of Billy “Brass Knuckles,” a classical physicist infamous for his no-nonsense attitude and a knack for punching holes in established theories.

Max’s skepticism was palpable as they entered the office. “He’s a classical physicist; why would he give a damn about noncommuting charges?” he asked Shayna, raising an eyebrow.

Billy, overhearing Max’s question, let out a gravelly chuckle. “It’s not as crazy as it sounds,” he said, his eyes glinting with amusement. “Sure, the noncommutation of observables is at the core of quantum quirks like uncertainty, measurement disturbances, and the Einstein-Podolsky-Rosen paradox.”

Max nodded slowly, “Go on.”

“However,” Billy continued, leaning forward, “classical mechanics also deals with quantities that don’t commute, like rotations around different axes. So, how unique is noncommuting-charge thermodynamics to the quantum realm? What parts of this new physics can we find in classical systems?”
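(An aside outside the story, for readers who want to check Billy's claim about rotations. The snippet below is a minimal sketch in plain Python and is not taken from the Perspective; the helper names `rot_x`, `rot_z`, `matmul`, and `apply` are made up for illustration. It rotates a vector pointing along the y-axis by a quarter turn about x and then about z, repeats the experiment in the opposite order, and ends up in two different places.)

```python
import math

def rot_x(theta):
    """3x3 matrix for a rotation by theta about the x-axis."""
    c, s = math.cos(theta), math.sin(theta)
    return [[1, 0, 0], [0, c, -s], [0, s, c]]

def rot_z(theta):
    """3x3 matrix for a rotation by theta about the z-axis."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0], [s, c, 0], [0, 0, 1]]

def matmul(a, b):
    """Product of two 3x3 matrices (b acts first, then a)."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def apply(m, v):
    """Apply a 3x3 matrix to a 3-component vector."""
    return [sum(m[i][k] * v[k] for k in range(3)) for i in range(3)]

quarter_turn = math.pi / 2
v = [0.0, 1.0, 0.0]  # unit vector along y

# Rotate about x first, then about z.
x_then_z = apply(matmul(rot_z(quarter_turn), rot_x(quarter_turn)), v)
# Rotate about z first, then about x.
z_then_x = apply(matmul(rot_x(quarter_turn), rot_z(quarter_turn)), v)

# The two orderings send the same vector to different places.
orders_agree = all(abs(p - q) < 1e-9 for p, q in zip(x_then_z, z_then_x))
print(x_then_z, z_then_x, orders_agree)
```

(Up to floating-point rounding, the first ordering leaves the vector along +z and the second leaves it along -x, with no quantum mechanics involved.)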

Shayna crossed her arms, a devious smile playing on her lips. “Wouldn’t you like to know?”

“Wouldn’t we all?” Billy retorted, his grin mirroring hers. “But I’m about to retire. I’m not the one sneaking around your work.”

Max studied Billy for a moment longer, then nodded. “Alright, Brass Knuckles. Thanks for your time.”

As they stepped out of the shadowy office and into the damp night air, Shayna turned to Max. “Another dead end?”

Max nodded and lit a cigarette, the smoke curling into the misty air. “Seems so. But the clock’s ticking, and we can’t afford to stop now.”

Their fourth suspect, Tony “Munchies” Munsoni, was a specialist in chaos theory and thermodynamics, with an insatiable appetite for both science and snacks.

“Another non-quantum physicist?” Max muttered to Shayna, raising an eyebrow.

Shayna nodded, a glint of excitement in her eyes. “The most thrilling discoveries often happen at the crossroads of different fields.”

Munsoni looked up from his desk as they entered, setting aside his bag of chips with a wry smile. “I’ve read the Perspective article,” he said, getting straight to the point. “I agree: every chaotic or thermodynamic phenomenon deserves another look under the lens of noncommuting charges.”

Max leaned against the doorframe, studying Munsoni closely.

“We’ve seen how they shake up the Eigenstate Thermalization Hypothesis, monitored quantum circuits, fluctuation relations, and Page curves,” Munsoni continued, his eyes alight with intellectual fervour. “There’s so much more to uncover. Think about their impact on diffusion coefficients, transport relations, thermalization times, out-of-time-ordered correlators, operator spreading, and quantum-complexity growth.”

Shayna leaned in, clearly intrigued. “Which avenue do you think holds the most promise?”

Munsoni’s enthusiasm dimmed slightly, his expression turning regretful. “I’d love to dive into this, but I’m swamped with other projects right now. Give me a few months, and then you can start grilling me.”

Max glanced at Shayna, then back at Munsoni. “Alright, Munchies. If you hear anything or stumble upon any unusual findings, keep us in the loop.”

As they stepped back into the dimly lit hallway, Max turned to Shayna. “I saw his calendar; he’s telling the truth. His schedule is too packed to be stealing your work.”

Shayna’s shoulders slumped slightly. “Maybe. But we’re not done yet. The clock’s ticking, and we’ve got to keep moving.”

Finally, they turned to a pair of researchers dabbling in the peripheries of quantum thermodynamics. One was Twitch Uppity, an expert on non-Abelian gauge theories. The other, Jada LeShock, specialized in hydrodynamics and heavy-ion collisions.

Max leaned against the doorframe, his voice casual but probing. “What exactly are non-Abelian gauge theories?” he asked (setting up the exposition for the Quantum Frontiers reader’s benefit).

Uppity looked up, his eyes showing the weary patience of someone who had explained this concept countless times. “Imagine different particles interacting, like magnets and electric charges,” he began, his voice steady. “We describe the rules for these interactions using mathematical objects called ‘fields.’ These rules are called field theories. Electromagnetism is one example. Gauge theories are a class of field theories where the laws of physics are invariant under certain local transformations. This means that a gauge theory includes more degrees of freedom than the physical system it represents. We can choose a ‘gauge’ to eliminate the extra degrees of freedom, making the math simpler.”

Max nodded slowly, his eyes fixed on Uppity. “Go on.”

“These transformations form what is called a gauge group,” Uppity continued, taking a sip of his coffee. “Electromagnetism is described by the gauge group U(1). Other interactions are described by more complex gauge groups. For instance, quantum chromodynamics, or QCD, uses an SU(3) symmetry and describes the strong force between the particles in an atom’s nucleus. QCD is a non-Abelian gauge theory because its gauge group is noncommutative. This leads to many intriguing effects.”
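(Another aside outside the story, for readers who want to see the difference between U(1) and SU(3) in numbers. This is a minimal sketch in plain Python, not something from the Perspective; the helper names `matmul` and `commutator` are invented for illustration. Elements of U(1) are just phases, which commute. For SU(3) the sketch uses three of the standard Gell-Mann matrices, which generate the group rather than being group elements themselves, and checks that the first two fail to commute.)

```python
import cmath

# U(1): two arbitrary phases, multiplied in both orders.
phase_a = cmath.exp(1j * 0.3)
phase_b = cmath.exp(1j * 1.1)
u1_difference = abs(phase_a * phase_b - phase_b * phase_a)  # 0 for an Abelian group

# SU(3): the first three Gell-Mann matrices (generators of the group).
LAMBDA_1 = [[0, 1, 0], [1, 0, 0], [0, 0, 0]]
LAMBDA_2 = [[0, -1j, 0], [1j, 0, 0], [0, 0, 0]]
LAMBDA_3 = [[1, 0, 0], [0, -1, 0], [0, 0, 0]]

def matmul(a, b):
    """Product of two 3x3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def commutator(a, b):
    """[a, b] = ab - ba; the zero matrix exactly when a and b commute."""
    ab, ba = matmul(a, b), matmul(b, a)
    return [[ab[i][j] - ba[i][j] for j in range(3)] for i in range(3)]

comm_12 = commutator(LAMBDA_1, LAMBDA_2)

# Known relation for these generators: [lambda_1, lambda_2] = 2i * lambda_3.
expected = [[2j * entry for entry in row] for row in LAMBDA_3]
matches_relation = all(abs(comm_12[i][j] - expected[i][j]) < 1e-12
                       for i in range(3) for j in range(3))
print(u1_difference, matches_relation)
```

(The nonzero commutator of the generators is what makes the group noncommutative, or non-Abelian, in Uppity's sense.)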

“I see the noncommuting part,” Max stated, trying to keep up. “But, what’s the connection to noncommuting charges in quantum thermodynamics?”

“That’s the golden question,” Shayna interjected, excitement in her voice. “QCD is built on a non-Abelian group, so it may exhibit phenomena related to noncommuting charges in thermodynamics.”

“‘May’ is the key word,” Uppity replied. “In QCD, the symmetry is local, unlike the global symmetries described in the Perspective. An open question is how much noncommuting-charge quantum thermodynamics applies to non-Abelian gauge theories.”

Max turned his gaze to Jada. “How about you? What are hydrodynamics and heavy-ion collisions?” he asked, setting up more exposition.

Jada dropped her pencil and raised her head. “Hydrodynamics is the study of fluid motion and the forces acting on fluids,” she began. “We focus on large-scale properties, assuming that even if the fluid isn’t in equilibrium as a whole, small regions within it are. Hydrodynamics can explain systems in condensed matter and stages of heavy-ion collisions, which are collisions between large atomic nuclei at high speeds.”

“Where does the non-Abelian part come in?” Max asked, his curiosity piqued.

“Hydrodynamics researchers have identified specific effects caused by non-Abelian symmetries,” Jada answered. “These include non-Abelian contributions to conductivity, effects on entropy currents, and shortened neutralization times in heavy-ion collisions.”

“Are you looking for more effects due to non-Abelian symmetries?” Shayna asked, her interest clear. “A long-standing question is how heavy-ion collisions thermalize. Maybe the non-Abelian ETH would help explain this?”

Jada nodded, a faint smile playing on her lips. “That’s the hope. But as with all cutting-edge research, the answers are elusive.”

Max glanced at Shayna, his eyes thoughtful. “Let’s wrap this up. We’ve got some thinking to do.”

After hearing from each researcher, Max and Shayna found themselves back at the office. The dim light of the flickering bulb cast long shadows on the walls. Max poured himself a drink. He offered one to Shayna, who declined, her eyes darting around the room, betraying her nerves.

“So,” Max said, leaning back in his chair, the creak of the wood echoing in the silence. “Everyone seems to be minding their own business. Well…” Max paused, taking a slow sip of his drink, “almost everyone.”

Shayna’s eyes widened, a flicker of panic crossing her face. “I’m not sure who you’re referring to,” she said, her voice wavering slightly. “Did you figure out who stole my work?” She took a seat, her discomfort apparent.

Max stood up and began circling Shayna’s chair like a predator stalking its prey. His eyes were sharp, scrutinizing her every move. “I couldn’t help but notice all the questions you were asking and your eyes peeking onto their desks.”

Shayna sighed, her confident façade cracking under the pressure. “You’re good, Max. Too good… No one stole my work.” Shayna looked down, her voice barely above a whisper. “I read that Perspective article. It mentioned all these promising research avenues. I wanted to see what others were working on so I could get a jump on them.”

Max shook his head, a wry smile playing on his lips. “You tried to scoop the scoopers, huh?”

Shayna nodded, looking somewhat sheepish. “I guess I got a bit carried away.”

Max chuckled, pouring himself another drink. “Science is a tough game, Dr. Majidy. Just make sure next time you play fair.”

As Shayna left the office, Max watched the rain continue to fall outside. His thoughts lingered on the strange case, a world where the race for discovery was cutthroat and unforgiving. But even in the darkest corners of competition, integrity was a prize worth keeping…

That concludes my four-part series on our recent Perspective article. I hope you had as much fun reading them as I did writing them.